Boosting Linux Host Performance for Containerized Workloads (Nginx Benchmarking Guide)

🚀 Why Host Tuning Matters

Even though containers provide abstraction, they still rely on the host’s kernel, CPU scheduler, memory handling, and I/O layers. With smart tuning:

Network throughput can rise 📈

Memory management can become more efficient 🔁

Latency can be reduced for disk and CPU operations ⚡

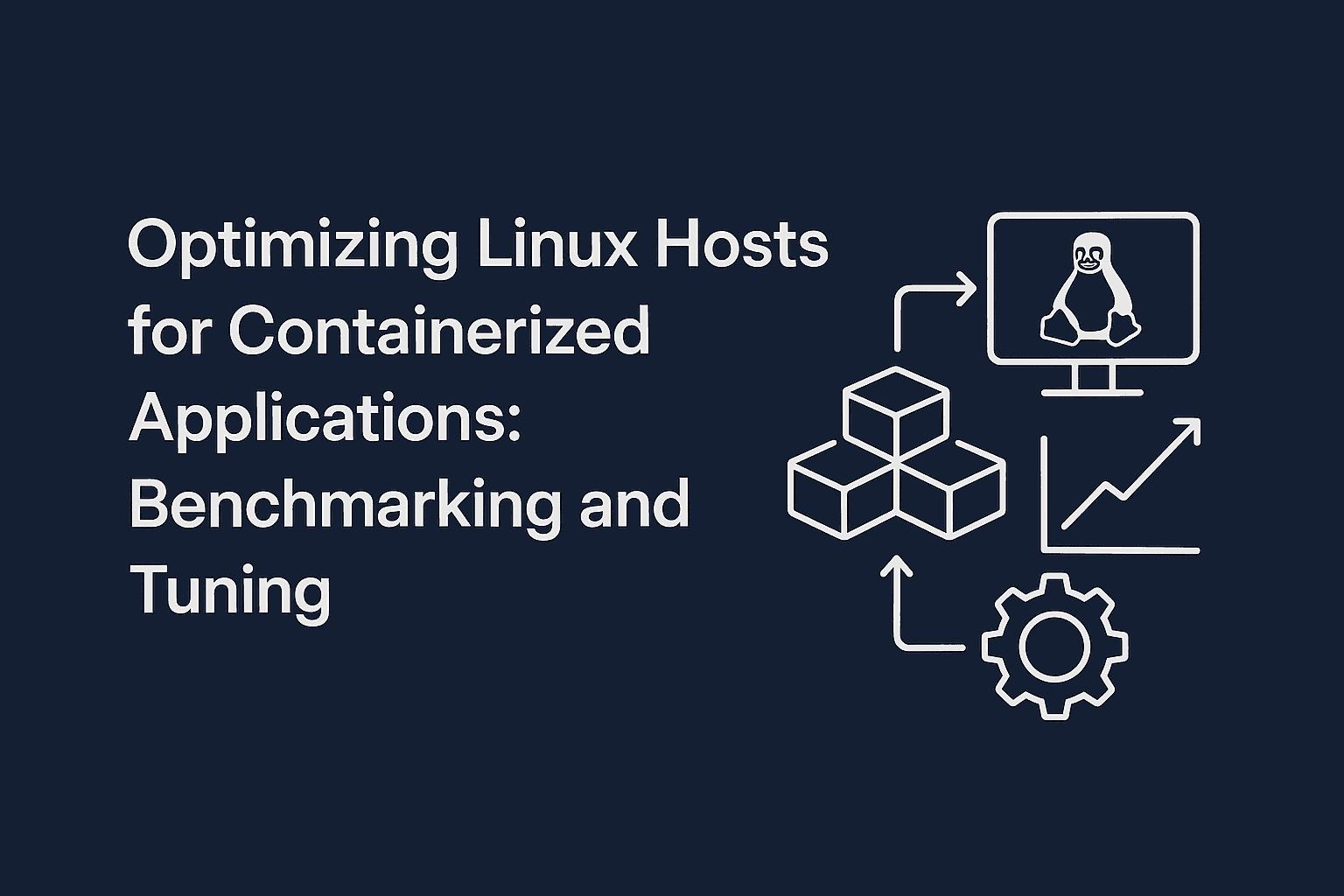

📊 Flow of the Optimization Process

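The process runs in five steps: launch the container, record a baseline, tune the host, redeploy, and re-test. Running the same benchmarks before and after tuning keeps the comparison reproducible.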

🛠️ Setup and Preliminaries

OS: Ubuntu 22.04

Application: Nginx inside a Docker container

Benchmark Tools:

stress-ng, iperf3, dd, htop, iotop, iftop, sysstat

Prerequisites

Ensure Docker is installed and running:

docker --version

Install benchmarking and monitoring tools:

sudo apt update

sudo add-apt-repository universe

sudo apt update

sudo apt install -y stress-ng iperf3 htop iotop iftop sysstat

📦 Step 1: Launch the Nginx Container

docker run -d --name nginx-test -p 8089:80 nginx

Verify by navigating to: http://localhost:8089
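The same check can be done from the terminal, which is handy on a headless host. The sketch below is one possible approach: `retry` is a hypothetical helper name (not part of Docker or curl) that re-runs a command until it succeeds, since the container may need a moment before it answers.

```shell
# retry ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Hypothetical helper: re-runs COMMAND until it succeeds or ATTEMPTS run out.
retry() {
  _attempts=$1
  _delay=$2
  shift 2
  _i=1
  while [ "$_i" -le "$_attempts" ]; do
    if "$@"; then
      return 0
    fi
    # Pause before the next attempt, but not after the last one.
    if [ "$_i" -lt "$_attempts" ]; then
      sleep "$_delay"
    fi
    _i=$((_i + 1))
  done
  return 1
}

# Example (assumes the container from Step 1 is publishing port 8089):
#   retry 10 1 curl -fsS -o /dev/null http://localhost:8089 && echo "nginx is up"
```

Here `curl -f` makes HTTP error responses count as failures, so the loop only stops once Nginx returns a successful response.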

📉 Step 2: Baseline Performance (Before Tuning)

CPU Test

stress-ng --cpu 4 --timeout 60s --metrics-brief

Memory Test

stress-ng --vm 2 --vm-bytes 1G --timeout 60s --metrics-brief

Disk I/O Test

dd if=/dev/zero of=testfile bs=1G count=1 oflag=dsync

rm -f testfile

Network Test (Loopback)

Terminal 1:

iperf3 -s

Terminal 2:

iperf3 -c 127.0.0.1
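To compare runs later, it helps to keep the raw output of each baseline test. A minimal sketch, assuming a `results/baseline` directory is acceptable; `run_and_log` and `RESULTS_DIR` are hypothetical names chosen for this example.

```shell
# Directory for saved output; override it for the post-tuning runs.
RESULTS_DIR=${RESULTS_DIR:-results/baseline}

# run_and_log LABEL COMMAND [ARGS...]
# Runs COMMAND, prints its output, and saves stdout and stderr together
# to $RESULTS_DIR/LABEL.log (dd, for example, reports speed on stderr).
# Note: the exit status is that of tee, not of COMMAND.
run_and_log() {
  _label=$1
  shift
  mkdir -p "$RESULTS_DIR"
  "$@" 2>&1 | tee "$RESULTS_DIR/$_label.log"
}

# Examples:
#   run_and_log cpu stress-ng --cpu 4 --timeout 60s --metrics-brief
#   run_and_log net iperf3 -c 127.0.0.1
```

Setting `RESULTS_DIR=results/tuned` before the post-tuning runs keeps both sets of logs side by side.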

⚙️ Step 3: Apply Linux Host Tuning

CPU Tuning

Install the cpupower tools and set the performance governor:

sudo apt install -y linux-tools-common linux-tools-generic

sudo cpupower frequency-set --governor performance
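It is worth confirming that the governor actually changed, because some systems do not expose frequency scaling at all. The sketch below reads the standard sysfs files directly; `show_governors` and the `CPU_SYSFS` override are hypothetical names used only for this example.

```shell
# Root of the sysfs CPU tree. The override exists only so the function can
# be pointed at a test directory (an assumption of this sketch).
CPU_SYSFS=${CPU_SYSFS:-/sys/devices/system/cpu}

# Prints each governor in use, prefixed by the number of CPUs using it.
show_governors() {
  _govs=$(cat "$CPU_SYSFS"/cpu[0-9]*/cpufreq/scaling_governor 2>/dev/null) || true
  if [ -z "$_govs" ]; then
    echo "cpufreq governors are not exposed on this system"
    return 0
  fi
  printf '%s\n' "$_govs" | sort | uniq -c
}

show_governors
```

If the message about governors not being exposed appears, which can happen on virtual machines, the `cpupower` command above likely had no effect.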

Memory Tuning

sudo sysctl -w vm.swappiness=10

sudo sysctl -w vm.dirty_ratio=20

sudo sysctl -w vm.dirty_background_ratio=10

Disk I/O Tuning

Change scheduler (check your block device name, e.g., nvme0n1):

cat /sys/block/nvme0n1/queue/scheduler

echo mq-deadline | sudo tee /sys/block/nvme0n1/queue/scheduler
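The scheduler file lists every available scheduler and marks the active one in square brackets. As a rough sketch, the helpers below extract that bracketed entry for one device or for all of them; `active_scheduler`, `list_schedulers`, and the `SYS_BLOCK` override are hypothetical names for this example.

```shell
# Root of the block-device sysfs tree; overridable only for experimentation.
SYS_BLOCK=${SYS_BLOCK:-/sys/block}

# active_scheduler DEVICE -> prints the bracketed (active) scheduler name.
active_scheduler() {
  sed -n 's/.*\[\([^]]*\)\].*/\1/p' "$SYS_BLOCK/$1/queue/scheduler"
}

# Prints "device: scheduler" for every block device that has a scheduler file.
list_schedulers() {
  for _f in "$SYS_BLOCK"/*/queue/scheduler; do
    [ -r "$_f" ] || continue
    _dev=${_f%/queue/scheduler}   # strip the file part of the path
    _dev=${_dev##*/}              # keep only the device name
    printf '%s: %s\n' "$_dev" "$(active_scheduler "$_dev")"
  done
}

# Examples:
#   list_schedulers            # find the right device name first
#   active_scheduler nvme0n1   # confirm the change took effect
```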

Network Tuning

sudo sysctl -w net.core.somaxconn=65535

sudo sysctl -w net.core.netdev_max_backlog=250000

sudo sysctl -w net.ipv4.tcp_max_syn_backlog=8192

sudo sysctl -w net.ipv4.tcp_tw_reuse=1
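Values set with `sysctl -w` replace the running settings, so recording the originals first makes it possible to revert. A minimal sketch, run before the commands above; `snapshot_sysctls` and the `PROC_SYS` override are hypothetical names for this example.

```shell
# Root of the sysctl tree; overridable only for experimentation (assumption).
PROC_SYS=${PROC_SYS:-/proc/sys}

# snapshot_sysctls KEY... -> prints "key = value" lines in sysctl.conf syntax.
snapshot_sysctls() {
  for _key in "$@"; do
    # net.core.somaxconn -> net/core/somaxconn
    _path="$PROC_SYS/$(printf '%s' "$_key" | tr . /)"
    if [ -r "$_path" ]; then
      # Collapse tabs and newlines so multi-value keys fit on one line.
      _val=$(tr -s '[:space:]' ' ' < "$_path" | sed 's/ $//')
      printf '%s = %s\n' "$_key" "$_val"
    else
      printf '# %s: not available on this kernel\n' "$_key"
    fi
  done
}

# Example: save the values that the memory and network tuning will change.
#   snapshot_sysctls vm.swappiness vm.dirty_ratio vm.dirty_background_ratio \
#     net.core.somaxconn net.core.netdev_max_backlog \
#     net.ipv4.tcp_max_syn_backlog net.ipv4.tcp_tw_reuse > sysctl-before.conf
# To revert later:
#   sudo sysctl -p sysctl-before.conf
```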

Kernel Parameters for Containers

sudo tee /etc/sysctl.d/99-container-tuning.conf > /dev/null <<EOF

fs.file-max = 2097152

vm.max_map_count = 262144

net.ipv4.ip_local_port_range = 1024 65535

EOF

sudo sysctl --system
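After `sysctl --system`, a quick comparison between the file and the live values shows whether every setting was accepted. The following is a sketch under the assumption that the file contains simple `key = value` lines; `check_sysctl_conf`, `squeeze`, and the `PROC_SYS` override are hypothetical names for this example.

```shell
# Root of the sysctl tree; overridable only for experimentation (assumption).
PROC_SYS=${PROC_SYS:-/proc/sys}

# Collapses runs of whitespace and trims both ends.
squeeze() { tr -s '[:space:]' ' ' | sed 's/^ //; s/ $//'; }

# check_sysctl_conf FILE
# Compares each "key = value" line in FILE with the live kernel value.
# Returns non-zero if any value differs.
check_sysctl_conf() {
  _rc=0
  while IFS= read -r _line || [ -n "$_line" ]; do
    case $_line in
      '#'*|';'*) continue ;;   # comment
      *=*) ;;                  # looks like a setting
      *) continue ;;           # blank or unrecognized line
    esac
    _key=$(printf '%s' "${_line%%=*}" | squeeze)
    _want=$(printf '%s' "${_line#*=}" | squeeze)
    _path="$PROC_SYS/$(printf '%s' "$_key" | tr . /)"
    if [ ! -r "$_path" ]; then
      printf 'SKIP     %s (not readable)\n' "$_key"
      continue
    fi
    _have=$(squeeze < "$_path")
    if [ "$_have" = "$_want" ]; then
      printf 'OK       %s = %s\n' "$_key" "$_have"
    else
      printf 'MISMATCH %s: want %s, have %s\n' "$_key" "$_want" "$_have"
      _rc=1
    fi
  done < "$1"
  return "$_rc"
}

# Example:
#   check_sysctl_conf /etc/sysctl.d/99-container-tuning.conf
```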

🔁 Step 4: Re-deploy the Nginx Container

Recreate the container so that it starts under the tuned host settings:

docker rm -f nginx-test

docker run -d --name nginx-test -p 8089:80 nginx

🔁 Step 5: Re-test the Performance (Post-Tuning)

After applying system-level optimizations, it's time to benchmark again and compare the performance with the baseline.

✅ CPU Re-test

Use the following command to stress the CPU with 4 workers for 60 seconds:

stress-ng --cpu 4 --timeout 60s --metrics-brief

✅ Memory Re-test

Run a memory stress test with 2 workers using 1 GB each for 60 seconds:

stress-ng --vm 2 --vm-bytes 1G --timeout 60s --metrics-brief

✅ Disk I/O Re-test

Evaluate sequential disk write speed using dd:

dd if=/dev/zero of=testfile bs=1G count=1 oflag=dsync

rm -f testfile

✅ Network Re-test (Loopback Throughput)

Terminal 1 (start the iperf3 server):

iperf3 -s

Terminal 2 (same host, run the iperf3 client):

iperf3 -c 127.0.0.1

This will test internal loopback throughput after networking optimizations.

📊 Benchmark Results: Before vs. After

| Metric | Before Tuning | After Tuning | Result |
| --- | --- | --- | --- |
| CPU (stress-ng) | 4367.92 bogo ops/s | 4300.34 bogo ops/s | 🔻 1.5% Decrease |
| Memory (stress-ng) | 92099.68 bogo ops/s | 91439.94 bogo ops/s | 🔻 0.7% Slight Decrease |
| Disk I/O (dd) | 1.4 GB/s | 1.4 GB/s | ➖ No Change |
| Network (iperf3) | 67.3 Gbps | 69.7 Gbps | 🔺 3.6% Improvement |
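The percentages in the Result column are the relative change from the before value to the after value. A small sketch for computing them from your own measurements; `pct_change` is a hypothetical helper name.

```shell
# pct_change BEFORE AFTER -> signed percentage change, one decimal place.
pct_change() {
  awk -v before="$1" -v after="$2" \
    'BEGIN { printf "%+.1f\n", (after - before) / before * 100 }'
}

pct_change 67.3 69.7           # network row: prints +3.6
pct_change 92099.68 91439.94   # memory row: prints -0.7
```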

📌 Analysis

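CPU & Memory: Throughput dipped by less than 2% and less than 1% respectively. Changes this small may be run-to-run variation rather than an effect of the tuning, so treat them as roughly neutral until repeated runs confirm a trend.

Disk I/O: No change. The scheduler switch did not affect this single 1 GB sequential write; schedulers tend to matter more under concurrent or mixed I/O.

Network: Loopback throughput rose about 3.6% after the backlog and connection-handling changes. These settings target connection queues rather than socket buffers, so the gain is worth confirming with repeated runs.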
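Single-digit percentage differences are easier to interpret when each benchmark is repeated a few times and summarized. The sketch below is one way to do that, assuming one result per line on standard input; `mean_stddev` is a hypothetical helper name and the sample values in the example are made up.

```shell
# Reads one number per line on stdin and prints the sample count, the mean,
# and the sample standard deviation.
mean_stddev() {
  awk '
    NF { n++; sum += $1; sumsq += $1 * $1 }
    END {
      if (n < 2) { print "need at least two samples"; exit 1 }
      mean = sum / n
      var = (sumsq - n * mean * mean) / (n - 1)
      if (var < 0) var = 0   # guard against floating-point rounding
      printf "n=%d mean=%.2f stddev=%.2f\n", n, mean, sqrt(var)
    }'
}

# Made-up sample values, for illustration only:
printf '%s\n' 10 12 14 | mean_stddev   # prints n=3 mean=12.00 stddev=2.00
```

If the before and after means differ by less than about one standard deviation, the change is hard to distinguish from noise.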

✅ Summary

Tuning a Linux host for containerized workloads isn't just about maxing out performance—it's about tailoring the system to match your application’s behavior.

Key Takeaways:

Network stack tuning had the most measurable benefit (3.6% gain).

Memory and CPU tuning had slight trade-offs, possibly due to overhead or governor changes.

Always benchmark before and after to validate changes.

Apply these techniques to any containerized app, not just Nginx.