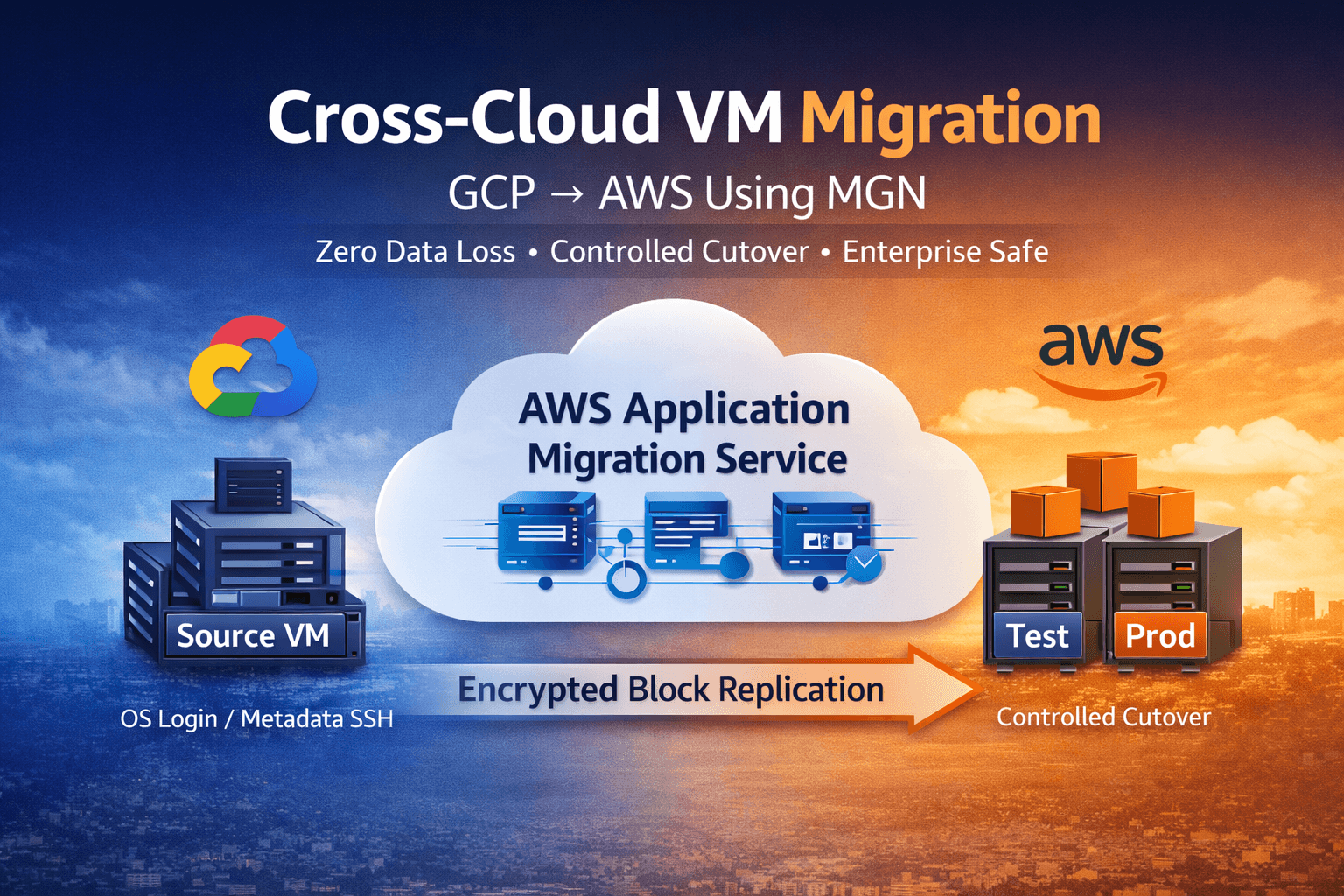

Production-Grade GCS to S3 Migration: Secure, Private, and Zero-Egress Architecture

Migrating object storage across cloud providers is not a copy task.

It is a cost, network, and security boundary problem.

We migrated 10+ TB of object data from Google Cloud Storage to Amazon S3 under strict enterprise constraints:

Zero data loss

No public exposure

No long-lived credentials

Private networking compatibility

Full audit traceability

Controlled and predictable cost

This document describes the architecture and execution model validated for production use.

1. The Engineering Constraint

In cross-cloud migration, the execution location determines financial and security risk.

If migration runs outside GCP:

GCS internet egress charges apply

Traffic traverses public endpoints

Credential exposure surface increases

Cost becomes unpredictable at scale

At multi-terabyte volume, this is unacceptable.

The objective was clear:

Eliminate public egress from GCS while maintaining integrity and operational control.

2. Architectural Design

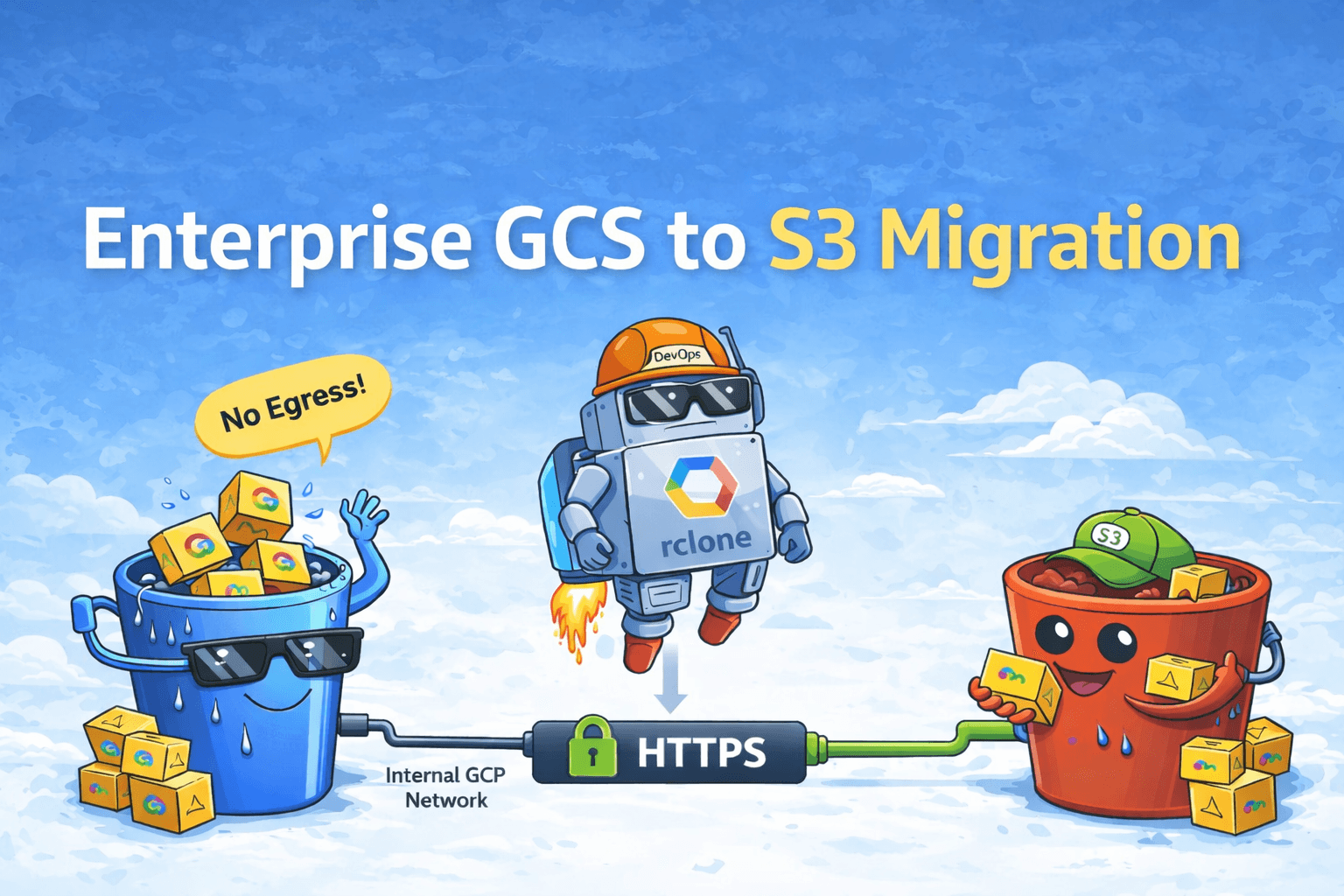

Migration was executed inside GCP using rclone on a Google Compute Engine (GCE) VM.

Data Flow

GCS Bucket

↓

GCE VM (rclone)

↓

HTTPS

↓

S3 Bucket

Impact of this design:

GCS → VM traffic remains internal

No GCS public egress billing

AWS inbound transfer remains free

Execution remains fully controlled

The primary cost risk was removed architecturally, not operationally.

3. Deployment Modes Validated

The design was tested under the following configurations:

| Component | Supported |
|---|---|
| Public GCP VM | Yes |
| Private GCP VM (No External IP) | Yes |
| Public S3 Endpoint | Yes |
| S3 via VPC Endpoint | Yes |
| VPN / Interconnect | Yes |

This ensures compatibility with both PoC and hardened enterprise environments.

4. Network & Security Model

GCP Side

Private VM (no external IP)

Private Google Access enabled

Cloud NAT or VPN for outbound traffic

No inbound exposure

Metadata-based IAM authentication

No service account JSON keys were used.

Access to GCS was restricted to the VM-attached service account.
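The GCP-side setup above can be sketched with `gcloud`. This is a minimal sketch, not the exact commands used in the migration: the function name and every argument (VM name, zone, subnet, region, service account email) are hypothetical placeholders, and the read-only storage scope is an assumption about what the source side needs.

```shell
# Sketch: create a migration VM with no external IP and an attached service account.
# All argument values are placeholders supplied by the caller.
create_private_migration_vm() {
  local vm_name="$1" zone="$2" subnet="$3" region="$4" sa_email="$5"

  # Private Google Access lets a VM without an external IP reach the GCS API.
  gcloud compute networks subnets update "$subnet" \
    --region="$region" \
    --enable-private-ip-google-access || return 1

  # --no-address: the VM gets no external IP.
  # The attached service account is the identity exposed through the
  # metadata server, so no JSON key file is needed on disk.
  gcloud compute instances create "$vm_name" \
    --zone="$zone" \
    --subnet="$subnet" \
    --no-address \
    --service-account="$sa_email" \
    --scopes=https://www.googleapis.com/auth/devstorage.read_only
}
```

A call might look like `create_private_migration_vm mig-vm us-central1-a mig-subnet us-central1 migrator@my-project.iam.gserviceaccount.com`, where every value is illustrative.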

AWS Side

Two supported access patterns:

Public S3 (PoC only)

Standard endpoint with IAM control.

Private S3 (Production)

S3 Gateway VPC Endpoint

Bucket policy restricted using aws:SourceVpce

No public S3 exposure

Example condition:

    {
      "Condition": {
        "StringEquals": {
          "aws:SourceVpce": "vpce-xxxxxxxx"
        }
      }
    }

Traffic remains private across environments.
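To show where such a condition sits in a complete policy, here is a minimal sketch that prints a full bucket policy document. It uses the commonly documented deny form (`Deny` with `StringNotEquals`) rather than the `StringEquals` fragment shown above, and the function name, bucket name, and endpoint ID are placeholders, not values from the migration.

```shell
# Print a bucket policy that denies every S3 request that does not
# arrive through the given VPC endpoint.
# Usage: vpce_bucket_policy <bucket> <vpce-id>   (both are placeholders)
vpce_bucket_policy() {
  local bucket="$1" vpce_id="$2"
  cat <<EOF
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "DenyAccessOutsideVpce",
      "Effect": "Deny",
      "Principal": "*",
      "Action": "s3:*",
      "Resource": [
        "arn:aws:s3:::${bucket}",
        "arn:aws:s3:::${bucket}/*"
      ],
      "Condition": {
        "StringNotEquals": {
          "aws:SourceVpce": "${vpce_id}"
        }
      }
    }
  ]
}
EOF
}
```

The output could be written to a file and applied with `aws s3api put-bucket-policy --bucket <bucket> --policy file://policy.json`. An explicit deny of this kind also blocks console and administrative access from outside the endpoint, so it is worth testing on a non-production bucket first.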

5. Authentication Strategy

GCS

    [gcs]
    type = google cloud storage
    env_auth = true

VM-attached service account

Metadata server authentication

No static credential storage

AWS

PoC: Temporary access key

Production: IAM Role / STS

No long-lived credentials were introduced.
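One way to keep AWS credentials off disk is to define the S3 remote through environment variables, which rclone reads using the `RCLONE_CONFIG_<REMOTE>_<OPTION>` naming scheme. The sketch below assumes the remote is called `s3`, as in the commands in this article; the region value is a placeholder, and how the temporary STS credentials are issued is outside the scope of this example.

```shell
# Define the "s3" remote via environment variables instead of a config file.
export RCLONE_CONFIG_S3_TYPE=s3
export RCLONE_CONFIG_S3_PROVIDER=AWS
export RCLONE_CONFIG_S3_ENV_AUTH=true      # take AWS credentials from the environment
export RCLONE_CONFIG_S3_REGION=us-east-1   # assumption: replace with the bucket's region

# With env_auth enabled, rclone picks up temporary credentials from the
# standard AWS variables, which must be set by whatever process issues them:
#   AWS_ACCESS_KEY_ID, AWS_SECRET_ACCESS_KEY, AWS_SESSION_TOKEN
```

Because the credentials live only in the process environment, they expire with the STS session and leave nothing behind in `rclone.conf`.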

6. Migration Execution Model

Execution followed controlled phases.

Phase 1 — Pre-Flight Validation

IAM verification

DNS resolution check

Connectivity confirmation

Source and destination listing

Mandatory dry-run:

    rclone copy gcs:<bucket> s3:<bucket> \
      --dry-run --checksum

No migration proceeded without validation.
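The pre-flight checks can be chained into one gate that stops at the first failure. This is a sketch under assumptions: the function name is hypothetical, the two hostnames are the generic public endpoints (a regional or VPC endpoint name may apply instead), and `getent` assumes a Linux VM.

```shell
# Run all pre-flight checks; return non-zero on the first failure.
# Usage: preflight <source-remote:bucket> <destination-remote:bucket>
preflight() {
  local src="$1" dst="$2" host

  # DNS: both storage endpoints must resolve from the VM.
  for host in storage.googleapis.com s3.amazonaws.com; do
    getent hosts "$host" >/dev/null \
      || { echo "DNS lookup failed: $host" >&2; return 1; }
  done

  # IAM and connectivity: a listing fails fast if credentials or routes are wrong.
  rclone lsd "$src" >/dev/null \
    || { echo "cannot list source: $src" >&2; return 1; }
  rclone lsd "$dst" >/dev/null \
    || { echo "cannot list destination: $dst" >&2; return 1; }

  # Dry run: report what would be copied without writing anything.
  rclone copy "$src" "$dst" --dry-run --checksum
}
```

Running `preflight "gcs:<bucket>" "s3:<bucket>" && echo "pre-flight passed"` then gives a single pass/fail signal before any data moves.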

Phase 2 — Controlled Transfer

    rclone copy gcs:<bucket> s3:<bucket> \
      --checksum \
      --fast-list \
      --transfers=8 \
      --checkers=8 \
      --progress

Controls enforced:

Checksum validation

Resume-safe execution

Tuned concurrency

Encrypted HTTPS transport

Concurrency was deliberately limited to prevent throttling.
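For audit purposes, the same transfer can be wrapped so that every run writes a log file and retries transient failures. The sketch below keeps the flags from the command above and adds rclone's `--retries`, `--low-level-retries`, `--log-level`, and `--log-file` options; the function name, retry counts, and log path are assumptions, not the values used in the migration.

```shell
# Run the transfer with retries and a persistent log.
# Usage: run_transfer <source> <destination> <log-file>
run_transfer() {
  local src="$1" dst="$2" log_file="$3"

  # --progress is omitted here because output goes to the log file.
  rclone copy "$src" "$dst" \
    --checksum \
    --fast-list \
    --transfers=8 \
    --checkers=8 \
    --retries=5 \
    --low-level-retries=10 \
    --log-level=INFO \
    --log-file="$log_file"
}
```

Re-running the same call after an interruption is safe: `rclone copy` skips objects that already exist unchanged at the destination, so only the remainder is transferred.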

Phase 3 — Integrity Verification

    rclone check gcs:<bucket> s3:<bucket>

This ensured:

No checksum mismatches

No partial transfers

No silent corruption

Logs and artifacts were archived for audit compliance.
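To turn the verification into an archivable artifact, `rclone check` can write a per-object report with its `--combined` option, where each line starts with a status symbol (`=` identical, `+` missing on destination, `-` missing on source, `*` different, `!` error). The sketch below is an assumption about how such a report might be produced and summarized; both function names and the report path are hypothetical.

```shell
# Compare source and destination and write one status line per object.
# Usage: verify_migration <source> <destination> <report-file>
verify_migration() {
  local src="$1" dst="$2" report="$3"
  rclone check "$src" "$dst" --combined "$report"
}

# Count report lines by their leading status symbol.
# Usage: summarize_check_report <report-file>
summarize_check_report() {
  LC_ALL=C awk '
    { count[substr($0, 1, 1)]++ }
    END {
      printf "identical=%d missing_on_dst=%d missing_on_src=%d differ=%d errors=%d\n",
        count["="], count["+"], count["-"], count["*"], count["!"]
    }' "$1"
}
```

A clean migration shows zero in every field except `identical`. The report file itself can be archived alongside the transfer logs.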

7. Cost Model (5 TB Reference)

| Component | Cost |
|---|---|
| GCS Egress | $0 |
| GCP VM | Minimal runtime cost |
| Cloud NAT | Predictable usage cost |
| AWS Transfer IN | $0 |
| rclone | Free |
| Total | Infrastructure-level only |

If executed externally, GCS internet egress alone would exceed the total infrastructure cost above.

Cost predictability was achieved through architectural control.
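The external-execution comparison comes down to simple arithmetic: volume multiplied by a per-GiB egress rate. The helper below is a sketch for that calculation only; the function name is hypothetical and the rate is an input the reader must take from current provider pricing, not a figure from this migration.

```shell
# Estimate internet egress cost for a given data volume.
# Usage: egress_cost_usd <volume-in-TiB> <rate-in-USD-per-GiB>
egress_cost_usd() {
  # 1 TiB = 1024 GiB; LC_ALL=C keeps the decimal separator a dot.
  LC_ALL=C awk -v tib="$1" -v rate="$2" \
    'BEGIN { printf "%.2f\n", tib * 1024 * rate }'
}
```

For example, with a purely illustrative rate of 0.12 USD per GiB, `egress_cost_usd 5 0.12` prints `614.40` for the 5 TB reference volume.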

8. Alternatives Considered

Managed services were evaluated.

Observed trade-offs:

Per-GB transfer charges

Reduced retry visibility

Limited private networking control

Additional service dependency

For small migrations, managed services are acceptable.

For enterprise-scale workloads requiring cost governance and auditability, direct execution provides stronger guarantees.

9. Outcome

Zero data loss

No public exposure

IAM-based authentication

Private networking compatibility

Full checksum validation

Resume-safe execution

Audit-ready logs

Production approval

10. Conclusion

Cross-cloud storage migration is not about moving objects.

It is about defining the correct execution boundary.

By executing the migration inside GCP, we eliminated public egress cost, preserved private networking, reduced credential risk, and maintained deterministic control over the entire process.

When execution placement is correct, migration risk becomes controlled, cost becomes predictable, and integrity becomes measurable.